This is the code implementation for the paper "DiVeQ: Differentiable Vector Quantization Using the Reparameterization Trick" accepted at ICLR 2026.

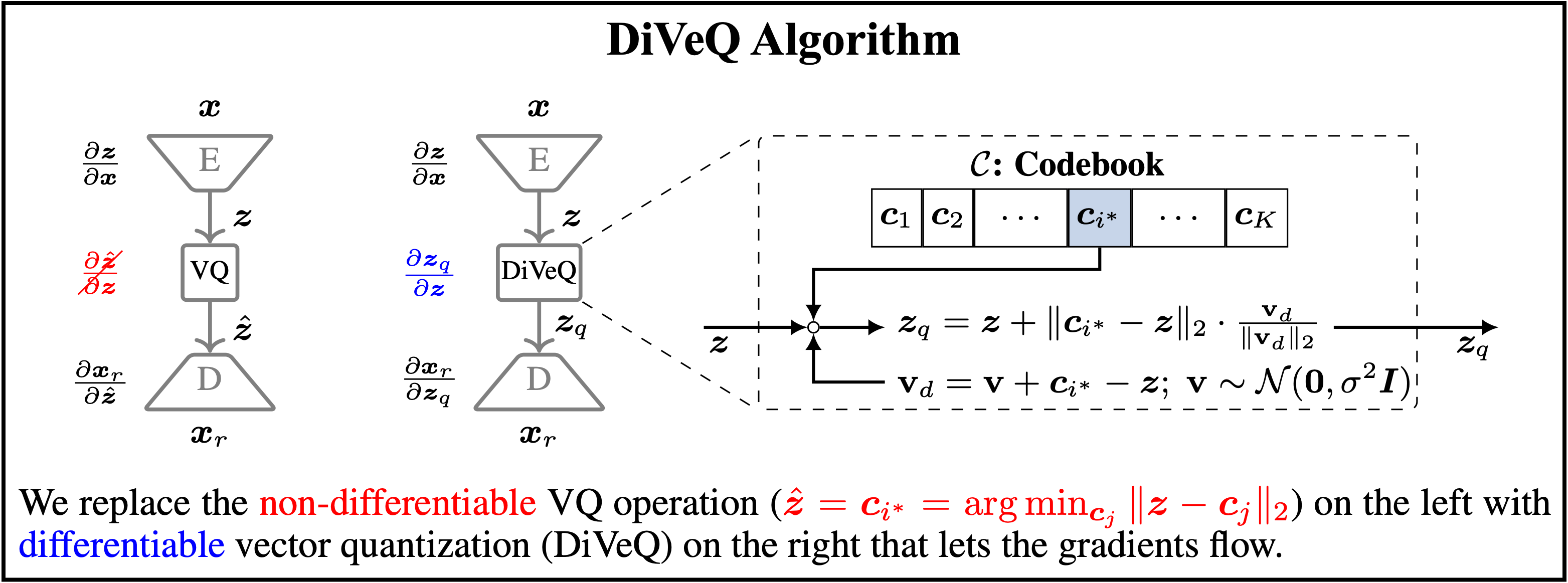

Abstract: Vector quantization is common in deep models, yet its hard assignments block gradients and hinder end-to-end training. We propose DiVeQ, which treats quantization as adding an error vector that mimics the quantization distortion, keeping the forward pass hard while letting gradients flow. We also present a space-filling variant (SF-DiVeQ) that assigns to a curve constructed by the lines connecting codewords, resulting in less quantization error and full codebook usage. Both methods train end-to-end without requiring auxiliary losses or temperature schedules. In VQ-VAE image compression, VQGAN image generation, and DAC speech coding tasks across various data sets, our proposed methods improve reconstruction and sample quality over alternative quantization approaches.
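The two geometric ideas in the abstract can be shown with a small pure-Python sketch. It is not the code in `diveq.py` or `sf_diveq.py`, and it leaves out the gradient handling entirely. It only shows that hard quantization can be written as adding an error vector to the input, and that assigning to a curve through the codewords cannot give a larger error than assigning to the nearest codeword. The function names, the toy codebook, and the choice to connect codewords in index order are assumptions made for this example.

```python
import math

def nearest_codeword(z, codebook):
    """Return (index, codeword) of the codeword closest to z (Euclidean distance)."""
    dists = [math.dist(z, c) for c in codebook]
    k = min(range(len(codebook)), key=dists.__getitem__)
    return k, codebook[k]

def quantize_as_error(z, codebook):
    """Hard quantization written as z + e, where e = c_k - z is the error vector."""
    k, c = nearest_codeword(z, codebook)
    error = [ci - zi for ci, zi in zip(c, z)]
    z_q = [zi + ei for zi, ei in zip(z, error)]
    return k, z_q, error

def project_to_segment(z, a, b):
    """Closest point to z on the line segment from a to b."""
    ab = [bi - ai for ai, bi in zip(a, b)]
    denom = sum(x * x for x in ab)
    if denom == 0.0:
        return list(a)  # degenerate segment: both endpoints coincide
    t = sum((zi - ai) * x for zi, ai, x in zip(z, a, ab)) / denom
    t = max(0.0, min(1.0, t))  # clamp so the point stays on the segment
    return [ai + t * x for ai, x in zip(a, ab)]

def quantize_to_curve(z, codebook):
    """Closest point to z on the piecewise-linear curve through the codewords.

    Assumption for this sketch: codewords are connected in index order.
    """
    candidates = [
        project_to_segment(z, codebook[i], codebook[i + 1])
        for i in range(len(codebook) - 1)
    ]
    return min(candidates, key=lambda p: math.dist(z, p))

# Toy 2-D codebook and input, chosen only for illustration.
codebook = [[0.0, 0.0], [1.0, 0.0], [1.0, 1.0]]
z = [0.4, 0.1]

k, z_q, error = quantize_as_error(z, codebook)
curve_point = quantize_to_curve(z, codebook)

hard_error = math.dist(z, z_q)           # distance to the nearest codeword
curve_error = math.dist(z, curve_point)  # distance to the nearest point on the curve
```

Because the curve passes through every codeword, `curve_error` can never exceed `hard_error`. In this toy case the input lies close to the segment between the first two codewords, so the curve assignment is noticeably closer.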

- `train.py`: code to train the VQ-VAE model
- `model.py`: code for VQ-VAE encoder and decoder
- `diveq.py`: code to optimize the codebook by DiVeQ
- `sf_diveq.py`: code to optimize the codebook by SF-DiVeQ
- `diveq_detach.py`: code to optimize the codebook by Detach variant of DiVeQ
- `sf_diveq_detach.py`: code to optimize the codebook by Detach variant of SF-DiVeQ
- `residual_diveq.py`: code to optimize the codebook by Residual VQ using DiVeQ
- `residual_sf_diveq.py`: code to optimize the codebook by Residual VQ using SF-DiVeQ
- `product_diveq.py`: code to optimize the codebook by Product VQ using DiVeQ
- `product_sf_diveq.py`: code to optimize the codebook by Product VQ using SF-DiVeQ
- `vq.py`: code to optimize the codebook using other VQ baseline methods

Create the environment by running the following commands in your terminal, in order.

conda create --name vqvae_comp python=3.13.3

conda activate vqvae_comp

pip install -r vqvae_comp_reqs.txt

- `training_vqgan.py`: code to train the VQ-VAE model
- `training_transformer.py`: code to train the transformer
- `sample_transformer.py`: code to generate images from trained VQGAN
- `compute_fid.py`: code to compute the FID score
- `encoder.py`: contains the code for VQ-VAE encoder
- `decoder.py`: contains the code for VQ-VAE decoder
- `discriminator.py`: contains the code for the discriminator model used for training VQ-VAE
- `vqgan.py`: contains the code to build the VQ-VAE model with the encoder, vector quantization, and decoder
- `transformer.py`: contains the code to build the transformer model
- `mingpt.py`: contains the code for GPT model
- `helper.py`: contains some utility blocks used in building the models such as GroupNorm, ResidualBlock
- `utils.py`: contains some utility functions like codebook replacement
- `diveq.py`: code to optimize the codebook by DiVeQ
- `sf_diveq.py`: code to optimize the codebook by SF-DiVeQ
- `diveq_detach.py`: code to optimize the codebook by Detach variant of DiVeQ
- `sf_diveq_detach.py`: code to optimize the codebook by Detach variant of SF-DiVeQ
- `vq.py`: code to optimize the codebook using other VQ baseline methods

Create the environment by running the following commands in your terminal, in order.

conda create --name vqgan_gen python=3.13.3

conda activate vqgan_gen

pip install -r vqgan_gen_reqs.txt

@InProceedings{vali2026diveq,

title={{DiVeQ}: {D}ifferentiable {V}ector {Q}uantization {U}sing the {R}eparameterization {T}rick},

author={Vali, Mohammad Hassan and Bäckström, Tom and Solin, Arno},

booktitle={International Conference on Learning Representations (ICLR)},

year={2026}

}

This software is provided under the MIT License. See the accompanying LICENSE file for details.